rsc's Diary: ELC-E 2018 - Day 1

This is my report from the first day of Embedded Linux Conference Europe (ELC-E) 2018 in Edinburgh.

Keynotes

The morning of the first ELC-E day started with keynotes; I used the time to visit the booths. Unfortunately, there were not that many embedded topics around, so it was a quick walk. I tried to attend Jonathan Corbet's kernel keynote, but the room was already full, so I watched the livestream. Jonathan talked about long-term kernel stability, and it was good to see that he proposes using modern mainline kernels (or at least the latest "stable" kernels) instead of trying to use those pretty old longterm kernels - that's basically what we at Pengutronix have been telling our customers for a long time now.

Later, in the evening, I watched the video about the LF Energy project. I have no idea what they want to do: it was a talk without any content, and anyone who is interested is supposed to come to an extra $200 session on Thursday. Really, LF...?

prplMesh

The first technical talk I heard on day 1 was Arnout Vandecappelle's talk about "prplMesh: An Open-Source Implementation of the Wi-Fi Alliance Multi-AP Specification". Basically, the standard makes it possible to connect different access points via WiFi instead of a cable. There is a concept of a single "controller agent" per network - the device that has an upstream link. Controller agents propagate their availability through the network.

The first-time authentication works similarly to WPS, routed over the network. The Multi-AP standard has been finished, so the first vendors are starting to implement it. The idea of the carriers is to have full control over people's networks, so that normal folks can call the helpdesk, which then has full management possibilities.

The prpl stack sits in Linux userspace, provides features for configuration, multicast, routing, QoS and meshing and provides a high level API for upper layers. The reference implementation for Linux is open source and ready to be WiFi certified, but they see the controller agent as a differentiator, so that part isn't open source.

The prplMesh system works with cfg80211 and a patched hostapd. Some features are still missing, such as end-to-end authentication/encryption or QoS, but those parts are being worked on and should be addressed in the second release.

The Modern Linux Graphics Stack on Embedded Systems

My colleague Michael Tretter then talked about the recent Linux graphics stack. In contrast to desktop environments (which have been dominated by X.org for the last 30 years), embedded setups come with a set of new challenges; this is where Wayland comes into play. While Wayland is mainly about the protocols, the compositor does the actual work, complemented by the Wayland "shell", which is the visible part. All major toolkits (Qt, GTK+, Chromium) have been ported to Wayland. In contrast to X11, the toolkit talks directly to Mesa/OpenGL and the compositor only cares about compositing.

On embedded systems, we have limited hardware resources, memory bandwidth and maybe even (battery) power. This results in different hardware accelerators on embedded systems. In many cases, the different parts of the graphics hardware are from several IP core vendors, which often results in incompatibilities of the used memory formats.

One important mechanism for saving memory bandwidth is the dma-buf framework: it makes it possible to hand over hardware memory buffers from one component to another without actually copying memory. A second important mechanism is atomic modeset, which makes it possible to change all parts of the display configuration at once. Although the drivers support many of the accelerated features, it is a challenge to make those mechanisms available to the upper layers of the stack. Weston, with its DRM backend, turned out to be a good candidate for that task: it takes care of dma-buf, atomic modesets, overlays and format modifiers. Putting tiled buffers directly on an output plane is not supported yet.
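The all-or-nothing idea behind atomic modeset can be illustrated with a small toy model. This is only a sketch of the concept and not the DRM API: the class `ToyDisplay`, its property names and the table of supported values are made up for illustration.

```python
class CommitRejected(Exception):
    """Raised when at least one requested change is not supported."""


class ToyDisplay:
    """Hypothetical display model, not related to the real DRM/KMS API."""

    def __init__(self):
        # Current configuration of the (imaginary) display pipeline.
        self.state = {"mode": "1280x720", "plane0.format": "XRGB8888"}
        # Values the (imaginary) hardware accepts for each property.
        self.supported = {
            "mode": {"1280x720", "1920x1080"},
            "plane0.format": {"XRGB8888", "NV12"},
        }

    def atomic_commit(self, changes):
        # First validate every change, without touching the current state.
        bad = [key for key, value in changes.items()
               if value not in self.supported.get(key, set())]
        if bad:
            raise CommitRejected("unsupported: " + ", ".join(sorted(bad)))
        # Only if all changes are valid, apply them together.
        self.state.update(changes)


display = ToyDisplay()
before = dict(display.state)

try:
    # One valid and one invalid change: nothing must be applied.
    display.atomic_commit({"mode": "1920x1080", "plane0.format": "BOGUS"})
    rejected = False
except CommitRejected:
    rejected = True

unchanged_after_reject = display.state == before

# Two valid changes are applied in one step.
display.atomic_commit({"mode": "1920x1080", "plane0.format": "NV12"})
```

The point of the model is that a partially valid request never leaves the display in a half-configured state.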

While Weston provides a default shell, on an actual embedded system you might want to write your own shell - in the end, it's the thing visible to the user. There are several example shells in the code base, like the Desktop Shell, the IVI Shell or the Fullscreen Shell. It turned out that the IVI Shell, initially written for in-vehicle infotainment, might be a good candidate for other kinds of embedded systems as well, so Michael took a closer look. Unfortunately, the xdg_shell protocol was not implemented, so normal applications couldn't be used; however, there is a patch set available. Michael took that, replaced the HMI controller with a custom plugin and made it possible to hook in a self-made user interface.

Looking for alternatives, there is another framework available for writing your own compositor, called wlroots, which is a modular Wayland compositor library (used by Sway, Phosh and Rootston). In his opinion, the problem with wlroots is that it doesn't make use of overlay planes and format modifiers. For people writing their visible components in QML, Qt Compositor is also available, but it does not use atomic modeset and format modifiers either.

In conclusion, Michael is currently not unhappy with the possibilities Weston provides.

Developing Open-Source Software RTOS with Functional Safety in Mind?

In my first afternoon session, I listened to Anas Nashif talking about Zephyr and safety. He outlined that open source software can in general be used for safety critical systems, but one must take care that the safety aspect is taken into account early in the project's life cycle - otherwise things might become really expensive.

While Zephyr has a small trusted code base and offers all the core features of a real-time operating system, safety standards require a V-model and traceability from the requirements to the code; it turned out that this is difficult to achieve for open source projects, which follow a more bazaar-like development model: for every line of code, you need a requirement, documentation and testing. The group has used doxygen to scan and document all these things, and it turned out to be a lot of work.

As a coding standard, Zephyr uses the MISRA-C guidelines; MISRA-C requires functions to have a single point of exit at the end, which turns out to be problematic from time to time.

One of the issues people have with open source is that there is always the question "who is liable if something goes wrong?". Even if you have a codebase that fulfills the requirements, nobody wants to be the first one using it.

Currently, the best way of doing safety with Zephyr turned out to be snapshotting the source tree, validating it, specifying which features to use and automatically tracking and documenting all of that - basically building a cathedral on top of the bazaar. It is also a good idea to get a proof of concept approval from a certification authority as early as possible. From his point of view, the ideal project has a split development model: a flexible open source instance, plus an auditable and controlled instance. In this setup, the open source community helps to enrich the open instance, while the owning entity maintains the auditable instance and takes on the certification and qualification overhead. He explained that SafeRTOS did something similar on top of the FreeRTOS codebase, but not in public, and that they want to stay in public with their own Zephyr based codebase. Their roadmap to FuSA & Security pre-certification contains things like limiting the scope - i.e. by concentrating on a PSE52 environment.

My conclusion was that I'm still not convinced by Zephyr from a political point of view: one of the strengths of Linux is that the GPL ensures that everyone in the industry who makes actual use of Linux has equal rights and possibilities. With the Apache 2 license of Zephyr and with people doing safety processes behind closed doors, my expectation is that they happily take contributions from the community while doing lucrative things like safety projects which nobody else can do, because the safety process is not open.

100 Gbps Open source Software Router? It's Here

Then Jim Thompson talked about high bandwidth routing in the 2nd afternoon session. It turned out that there is an increasing demand for open source router platforms - mainly because people want to include routers in their standard IT automation and monitoring systems instead of relying on proprietary offers.

Filling network pipelines at 100 Gbps poses a whole set of engineering challenges. The solution he proposed is based on commodity hardware (water cooled and overclocked), offload network cards (Mellanox) plus a userspace control and dataplane stack, including userspace network card drivers, using the DPDK, VPP, Clixon, Strongswan, FRR and Netgate software stacks. With that setup, they bring the cost of such a router down to $7,500.
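To get a feeling for the scale of the problem, here is a rough back-of-the-envelope calculation (my own numbers, not taken from the talk). It assumes the worst case of minimum-size 64 byte Ethernet frames, which occupy 84 bytes on the wire once preamble and inter-frame gap are added; the 3 GHz core clock is an arbitrary assumption for illustration.

```python
# Back-of-the-envelope packet budget at 100 Gbps line rate.
LINK_BPS = 100e9          # link speed in bits per second
MIN_FRAME = 64            # minimum Ethernet frame size including FCS, in bytes
WIRE_OVERHEAD = 8 + 12    # preamble/SFD plus inter-frame gap, in bytes
CPU_HZ = 3e9              # assumed CPU clock, chosen for illustration only


def packets_per_second(frame_bytes, link_bps=LINK_BPS):
    """Maximum number of frames of the given size per second on the link."""
    bits_on_wire = (frame_bytes + WIRE_OVERHEAD) * 8
    return link_bps / bits_on_wire


pps = packets_per_second(MIN_FRAME)
ns_per_packet = 1e9 / pps
cycles_per_packet = CPU_HZ / pps

print(f"{pps / 1e6:.1f} Mpps, {ns_per_packet:.2f} ns per packet, "
      f"{cycles_per_packet:.1f} cycles per packet on one core")
```

With these assumptions, a single core would have only about 20 clock cycles per packet, which gives an idea of why offloading network cards and a userspace dataplane that spreads the load over many cores are attractive.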

Linux on RISC-V

Next, Khem Raj explained the current status of the RISC-V Linux port. After showing the feature set of the instruction set architecture, he talked about the state of the toolchain, which is being developed with an "upstream first" strategy. Binutils has supported RISC-V since 2.28, and the port landed in GCC 7 and in glibc 2.27. As there is still not much hardware available, it is important that QEMU 2.12.0 supports RISC-V as well. The RV64GC-LP64 little endian ABI is the current standard for the Linux port; the number of Debian packages ported to the architecture is increasing and has reached a fairly nice level. OpenEmbedded also provides good coverage of the architecture and supports QEMU and the SiFive Freedom U540. However, some key packages do not compile yet, like GStreamer.

Other tools make progress with RISC-V support as well, such as LLVM, OpenOCD and GDB; there is also an ongoing effort for support in UEFI, Grub, V8, Node.js and Rust. As there is still a lot of work to be done, the project is searching for more contributors.

Primer - Testing Your Embedded System

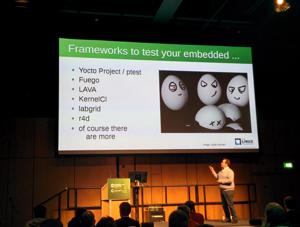

As we have initiated the labgrid project for automated testing, looking at what's happening in the Linux test community is always interesting.

Jan-Simon Möller then talked about "Primer - Testing Your Embedded System". He looked into a set of testing frameworks, starting with Yocto's ptest, a mechanism to package the tests behind 'make test' of a package "foo" into a package "foo-ptest", transfer them to the target and execute them there. Unsurprisingly, ptest is well integrated into Yocto/OE (although some sysroot path fixup needs to be done manually in order to make the tests run on the target). Currently, about 64 ptest packages are in the OE codebase. However, the output is quite large, a full run takes a lot of time (such as 5 hours) and the results need postprocessing before they can be visualized.
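As an illustration of the kind of postprocessing that is needed, here is a minimal sketch that condenses a ptest log into a summary. It assumes that the tests report results as `PASS: name`, `FAIL: name` or `SKIP: name` lines, which is the usual ptest convention; the sample log and the function name `summarize` are made up.

```python
import re
from collections import Counter

# One result per line, e.g. "PASS: test_open"; everything else is ignored.
RESULT_RE = re.compile(r"^(PASS|FAIL|SKIP):\s+(.*)$")


def summarize(log_text):
    """Return (counts per status, list of failed test names)."""
    counts = Counter()
    failed = []
    for line in log_text.splitlines():
        match = RESULT_RE.match(line.strip())
        if not match:
            continue  # build noise, debug output, etc.
        status, name = match.groups()
        counts[status] += 1
        if status == "FAIL":
            failed.append(name)
    return counts, failed


# Made-up sample log for demonstration purposes.
sample_log = """\
PASS: test_open
some unrelated output from the test program
FAIL: test_seek
SKIP: test_mmap
PASS: test_close
"""

counts, failed = summarize(sample_log)
print(dict(counts), "failed:", failed)
```

A real setup would feed the log files collected from the target into such a function and pass the result on to whatever is used for visualization.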

The next test framework he looked at was Fuego, a system for running automated tests on embedded targets from a host, with 100 pre-packaged tests. Fuego is a Jenkins instance already pre-loaded with the tests; it can also build and upload the tests to the target, then parse and visualize the results. A big plus is that there are many tests out of the box; the system needs no prerequisites on the target besides ssh and the test results can be nicely graphed in Jenkins. On the negative side, it assumes that the board is local and already deployed with a filesystem, and each board needs a separate configuration.

The next system to look at was LAVA, started by Linaro. LAVA manages the deployment of filesystems to a board farm, then powers on and boots the DuT and executes the tests on it. Currently, about 150 types of boards are supported and LAVA scales nicely towards large numbers of devices-under-test. Multiple labs are supported as well. However, the initial setup is hard and the test result parsing is not easy.

He then looked into KernelCI, which is about test aggregation and visualization. The test results are collected in a JSON format and uploaded to the kernelci.org visualization web interface. Most of the labs run LAVA, but the upload is independent of the tools. Meanwhile, in addition to boot testing, KernelCI also covers actual runtime tests. The setup remains difficult.
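The idea of tool-independent result aggregation can be sketched in a few lines. Note that the field names below (`lab`, `board`, `kernel`, `results`) are invented for this example and are not the actual KernelCI schema.

```python
import json


def make_report(lab, board, kernel, results):
    """Build one report as a lab might upload it (hypothetical format)."""
    return {"lab": lab, "board": board, "kernel": kernel, "results": results}


def aggregate(reports):
    """Count passed and failed tests per kernel version across all labs."""
    summary = {}
    for report in reports:
        per_kernel = summary.setdefault(report["kernel"], {"pass": 0, "fail": 0})
        for status in report["results"].values():
            per_kernel[status] += 1
    return summary


# Two labs with different tooling produce reports in the same format.
uploads = [
    json.dumps(make_report("lab-a", "board-x", "v4.19",
                           {"boot": "pass", "ltp": "fail"})),
    json.dumps(make_report("lab-b", "board-y", "v4.19",
                           {"boot": "pass"})),
]

# The aggregator only needs to understand the JSON, not the lab tooling.
summary = aggregate(json.loads(upload) for upload in uploads)
print(summary)
```

Because the labs only have to agree on the exchange format, each of them is free to use LAVA or any other tool internally.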

The next system Jan-Simon looked at was labgrid. It was started by Pengutronix and is a hardware control abstraction layer for testing embedded Linux systems. In addition to automated testing, it makes boards accessible to developers for interactive use. His positive findings are that it's easy to get both automated and developer access and that it abstracts the hardware nicely. On the negative side, integration with test tools (LAVA/Fuego) and the setup with pip were difficult. The docs are quite good. Those findings are of course extremely helpful for us, because seeing what kind of issues people have with labgrid helps us improve the codebase; some of the setup issues he has seen might already be solved in more recent versions.

Next, r4d was analyzed, which is used by the PREEMPT_RT folks. It integrates into libvirt, so switching boards on and off feels like switching virtual machines on and off, which is nice. However, the libvirt patches are not upstream yet.

All in all, his conclusion was that the frameworks all have their own strengths; collaboration and test result aggregation remain the most important things to work on.

BoF: Embedded Update Tools

The last session of the day I participated in was my colleague Jan Lübbe's "BoF: Embedded Update Tools". Instead of a talk, Jan decided to offer a BoF, in order to hear more about what the attendees need in the embedded updating area. Good tools should make sure the intended software ends up on the devices in a fail-safe and secure way. The existing image based tools like SWUpdate, Mender, RAUC etc. or the file based tools like OSTree and swupd (used by Clear Linux) provide all that. For the BoF, Jan wanted to focus on image based mechanisms (in contrast to deb/rpm/opk based systems or systems that focus on container updates).

The discussion was very active and people talked about their user stories. From the content point of view, too many aspects were discussed for this short overview article, so this might be a topic for another blog post.
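To make "fail-safe and secure" a bit more concrete, here is a toy model of an image based update with two redundant slots. It is a generic illustration and not how any of the tools mentioned above is implemented: the class `Slots` and its methods are invented, and a plain SHA-256 comparison stands in for the cryptographic signature verification a real updater would perform.

```python
import hashlib


class Slots:
    """Hypothetical A/B slot model: one slot is active, the other is spare."""

    def __init__(self):
        self.images = {"A": b"old system", "B": b""}
        self.active = "A"

    def inactive(self):
        return "B" if self.active == "A" else "A"

    def install(self, image, expected_sha256):
        # Verify the image before anything is written or switched.
        if hashlib.sha256(image).hexdigest() != expected_sha256:
            return False  # corrupted or unintended image: keep running system
        target = self.inactive()
        # The running system is never overwritten, only the spare slot.
        self.images[target] = image
        # Switching the active slot is the last step.
        self.active = target
        return True


slots = Slots()
new_image = b"new system"
good_hash = hashlib.sha256(new_image).hexdigest()

# A damaged download is rejected and the old system stays active.
bad_ok = slots.install(b"new syst", good_hash)
active_after_bad = slots.active

# The intact image is installed into the spare slot, which then becomes active.
good_ok = slots.install(new_image, good_hash)
```

The old image stays available in the other slot, so a real system could fall back to it if the new one fails to boot.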
